What Shortens Lithium Battery Life in IoT Devices


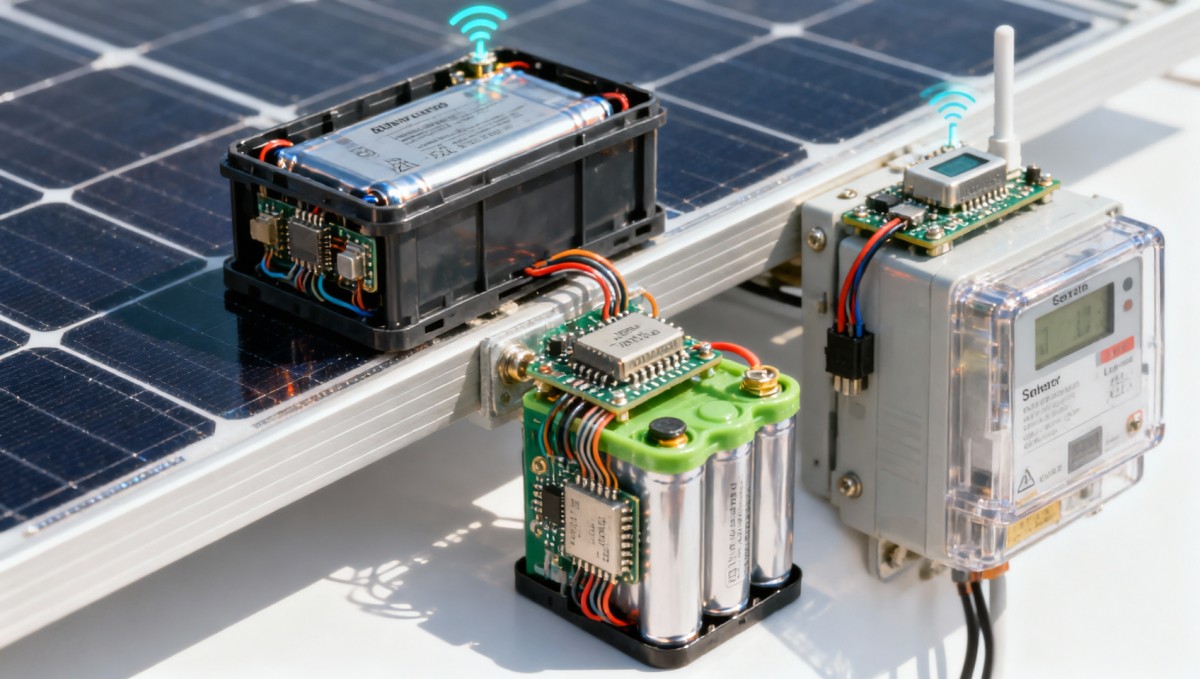

In renewable-energy IoT deployments, lithium batteries often fail long before their specifications suggest. At NexusHome Intelligence (NHI), we connect battery degradation to protocol latency benchmarks, Matter protocol data, Zigbee mesh capacity, and real smart home hardware testing, helping procurement teams, operators, and decision-makers separate marketing claims from measured engineering results.

For solar-powered monitoring nodes, HVAC controls in green buildings, smart meters, remote environmental sensors, and battery-backed safety devices, shorter-than-expected runtime is rarely caused by chemistry alone. In practice, battery life in IoT devices is reduced by a stack of interacting factors: radio retries, unstable mesh routes, poor temperature control, overly frequent polling, weak firmware design, and mismatched cell selection.

This matters across the renewable energy sector because every unnecessary truck roll, battery replacement cycle, and device outage raises operational cost and weakens the carbon-reduction case for connected infrastructure. Whether you are researching components, running field devices, sourcing for a multi-site project, or approving a building-wide deployment, understanding what actually drains lithium batteries is a procurement and engineering requirement, not just a maintenance detail.

Why lithium battery life falls short in renewable-energy IoT environments

In lab conditions, a lithium coin cell or primary lithium pack may appear capable of powering a sensor for 3 to 10 years. In the field, however, renewable-energy IoT devices often operate in harsher conditions: outdoor enclosures may swing from -20°C to 60°C, smart building nodes may face dense RF interference, and edge devices may transmit more often than the original power budget assumed. These variables compress battery life far below datasheet expectations.

A common mistake is treating battery life as a fixed specification rather than a system outcome. In solar sites, microgrids, and energy-management installations, runtime depends on at least 5 layers working together: cell chemistry, power regulation, radio protocol behavior, firmware duty cycle, and environmental stress. If one layer is poorly matched, a device designed for 60 months may fail in 18 to 24 months.
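
To make the "system outcome" idea concrete, here is a minimal Python sketch that treats each layer as a multiplier on either usable capacity or the current drawn from the cell. The `Layers` structure, its field names, and every number are hypothetical illustrations chosen for this example, not NHI measurements; a real estimate needs measured values for each layer.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730.0  # 8,760 h per year / 12


@dataclass
class Layers:
    """Hypothetical per-layer factors for one device design (illustrative values only)."""
    capacity_mah: float   # cell chemistry: nominal capacity
    temp_derate: float    # environmental stress: usable fraction of capacity (0..1)
    regulator_eff: float  # power regulation: converter efficiency (0..1)
    load_ma: float        # firmware duty cycle: average load current after the regulator
    retry_factor: float   # radio protocol behavior: >1.0 when retries stretch active time


def life_months(d: Layers) -> float:
    """Estimated runtime in months: usable capacity divided by current drawn from the cell."""
    usable_mah = d.capacity_mah * d.temp_derate
    # Simplification: the retry factor scales the whole average load, not just radio time.
    cell_ma = d.load_ma * d.retry_factor / d.regulator_eff
    return usable_mah / cell_ma / HOURS_PER_MONTH


well_matched = Layers(2400, 0.90, 0.90, 0.045, 1.0)
mismatched = Layers(2400, 0.70, 0.80, 0.060, 1.6)

print(f"{life_months(well_matched):.1f} months")  # about 59 months
print(f"{life_months(mismatched):.1f} months")    # about 19 months
```

Each mismatched factor looks modest on its own, but they compound: together they cut this hypothetical estimate from roughly 59 months to roughly 19 months, the same 60-month-to-18-to-24-month pattern described above.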

Protocol fragmentation adds another hidden burden. Zigbee, Thread, BLE, Matter over Thread, and proprietary sub-GHz approaches all have different overhead profiles. When a device experiences packet loss, route instability, or long join times, the battery pays the cost. A node that needs 4 transmission attempts instead of 1 can multiply its energy consumption in each reporting cycle, especially when wake windows are short and sleep control is inefficient.
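
A rough way to see why retries hurt is to split each reporting cycle into a fixed wake overhead plus a cost per transmission attempt, with a backoff period between attempts. The function name and all charge values below are hypothetical placeholders; real figures come from a current-probe trace of the actual device.

```python
def report_charge_uc(attempts: int,
                     wake_overhead_uc: float = 150.0,
                     per_attempt_uc: float = 300.0,
                     backoff_uc: float = 120.0) -> float:
    """Charge per reporting cycle in microcoulombs (µA·s).

    wake_overhead_uc: MCU wake-up and sensor read, paid once per cycle (hypothetical)
    per_attempt_uc:   one frame transmission plus the ACK wait (hypothetical)
    backoff_uc:       receiver/idle current during one backoff interval (hypothetical)
    """
    if attempts < 1:
        raise ValueError("a reporting cycle needs at least one attempt")
    # Backoff only occurs between attempts, so there are attempts - 1 backoff periods.
    return wake_overhead_uc + attempts * per_attempt_uc + (attempts - 1) * backoff_uc


clean = report_charge_uc(1)  # one clean attempt
noisy = report_charge_uc(4)  # four attempts with backoff in between
print(f"{noisy / clean:.1f}x energy per report")
```

With these assumed values the noisy cycle costs about 3.8 times as much as the clean one. Because the wake overhead is paid only once, the multiplier sits slightly below the attempt count; the shorter the wake overhead, the closer it gets to the number of attempts.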

In renewable-energy use cases, battery stress is often cyclical rather than constant. A smart meter may idle at low power for 23 hours, then spike during synchronization windows. A solar inverter accessory may communicate lightly in stable conditions but burst data every 5 to 15 seconds during grid events. These current peaks matter because lithium batteries age not only from total energy delivered, but also from repeated pulse loads, voltage sag, and thermal exposure.
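
The sketch below models a day as segments that each carry an average and a peak current, assuming a hypothetical smart-meter profile (23 idle hours plus a one-hour synchronization window; the 25 mA peak is an illustrative radio pulse, not a measured value). It shows why the daily average alone is not enough: the sync window dominates consumed charge, while the peak current, which drives voltage sag and pulse stress, disappears from the average entirely.

```python
from typing import List, NamedTuple, Tuple


class Segment(NamedTuple):
    hours: float
    avg_ma: float   # time-averaged current within the segment
    peak_ma: float  # highest pulse current within the segment


def summarize(profile: List[Segment]) -> Tuple[float, float, float]:
    """Return (daily average mA, peak mA, share of charge used by the busiest segment)."""
    total_h = sum(s.hours for s in profile)
    charges = [s.hours * s.avg_ma for s in profile]  # mAh per segment
    avg_ma = sum(charges) / total_h
    peak_ma = max(s.peak_ma for s in profile)
    return avg_ma, peak_ma, max(charges) / sum(charges)


# Hypothetical smart-meter day: 10 µA idle, then a busy synchronization hour.
day = [Segment(23.0, 0.010, 0.010), Segment(1.0, 0.500, 25.0)]
avg, peak, sync_share = summarize(day)
print(f"average {avg * 1000:.1f} µA, peak {peak:.0f} mA, sync share {sync_share:.0%}")
```

In this example the sync hour uses about two thirds of the daily charge, and the 25 mA pulse is more than 800 times the roughly 30 µA daily average, which is why pulse capability has to be checked separately from average consumption.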

The gap between datasheet life and deployed life

Manufacturers often quote battery life using low-duty-cycle assumptions such as one transmission every 30 or 60 minutes, a stable 20°C to 25°C environment, and ideal network conditions. Renewable-energy IoT deployments rarely stay inside those boundaries. Once sampling frequency doubles, LED indicators remain active, or a mesh route becomes unstable, the effective service life can decline by 20% to 50%.

The table below shows how typical deployment conditions in renewable-energy systems can diverge from test assumptions and shorten real battery life.

The main takeaway is that battery underperformance usually reflects a deployment mismatch, not a single defective part. For procurement teams, this means supplier evaluation must include protocol behavior, firmware maturity, and environmental test data rather than nominal battery capacity alone.

The biggest technical factors that shorten battery life

The most damaging battery-life killers in IoT devices are predictable once power is measured at the system level. At NHI, we repeatedly see 4 root causes: excessive communication overhead, poor sleep-state management, temperature-driven capacity loss, and bad component matching between battery, regulator, radio, and sensor load. These issues are especially relevant in renewable-energy deployments because devices are often distributed, maintenance-sensitive, and expected to operate for 24 to 84 months.

1. Communication overhead and retransmissions

Every radio event costs energy, but failed radio events cost much more. In crowded buildings with smart energy controls, Zigbee and Thread nodes may face interference from Wi-Fi, BLE beacons, and metal-rich infrastructure. If latency rises from 80 ms to 300 ms or a packet requires 2 to 5 retries, consumed charge accumulates quickly. A device that should wake for 1 second may remain active for 4 to 6 seconds per cycle.

2. Poor duty-cycle design

Engineers sometimes oversample because more data feels safer. Yet for many renewable-energy sensors, sampling temperature every 10 seconds instead of every 5 minutes adds little value while multiplying battery drain. The same is true for aggressive status heartbeats. If a device reports noncritical data 144 times per day instead of 24 times, battery depletion can accelerate by 2 to 4 times depending on payload size and network quality.
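
Six times more reports does not mean six times faster depletion because the sleep floor is paid regardless of reporting rate. A minimal sketch, assuming a hypothetical 5 µA sleep current and per-report charges of 10 mA·s on a clean network and 25 mA·s on a retry-heavy one:

```python
SECONDS_PER_DAY = 86_400


def daily_charge_mas(reports_per_day: int, charge_per_report_mas: float,
                     sleep_ua: float = 5.0) -> float:
    """Daily charge in mA·s: a constant sleep floor plus the per-report radio cost."""
    sleep_mas = sleep_ua * SECONDS_PER_DAY / 1000.0  # µA·s -> mA·s
    return sleep_mas + reports_per_day * charge_per_report_mas


def depletion_ratio(fast: int, slow: int, charge_per_report_mas: float) -> float:
    """How many times faster the battery drains at `fast` reports/day than at `slow`."""
    return (daily_charge_mas(fast, charge_per_report_mas)
            / daily_charge_mas(slow, charge_per_report_mas))


clean_net = depletion_ratio(144, 24, 10.0)  # hypothetical: 10 mA·s per report
noisy_net = depletion_ratio(144, 24, 25.0)  # hypothetical: retries inflate it to 25 mA·s
print(f"clean network: {clean_net:.1f}x, noisy network: {noisy_net:.1f}x")
```

Under these assumptions the acceleration is about 2.8x on the clean network and 3.9x on the noisy one, inside the 2 to 4 times range quoted above; a lower sleep floor or heavier per-report cost pushes the ratio toward the full 6x.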

3. Temperature and enclosure effects

Lithium cells dislike extremes. At low temperatures, internal resistance rises and voltage sag becomes more severe during transmission bursts. At high temperatures, self-discharge and chemical aging accelerate. A rooftop solar sensor enclosed in a poorly ventilated box can experience midday temperatures above 50°C, even if ambient air is lower. That shortens useful life and can also distort state-of-charge estimation.
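
Cold-weather voltage sag can be screened with a simple resistive model: terminal voltage during a pulse is roughly the open-circuit voltage minus peak current times internal resistance. The resistance table and the 2.0 V brownout threshold below are hypothetical placeholders; real values must come from the cell datasheet or bench tests at temperature, and this model ignores pulse duration and recovery effects.

```python
# Hypothetical internal resistance of a small lithium cell versus temperature (ohms).
R_INTERNAL_OHM = {-20: 40.0, 20: 15.0, 60: 12.0}


def loaded_voltage(v_open: float, i_peak_ma: float, temp_c: int) -> float:
    """Terminal voltage during a pulse: V = V_open - I_peak * R_internal(T)."""
    return v_open - (i_peak_ma / 1000.0) * R_INTERNAL_OHM[temp_c]


def survives_pulse(v_open: float, i_peak_ma: float, temp_c: int,
                   brownout_v: float = 2.0) -> bool:
    """True if the loaded voltage stays at or above the device's brownout threshold."""
    return loaded_voltage(v_open, i_peak_ma, temp_c) >= brownout_v


for t in sorted(R_INTERNAL_OHM):
    v = loaded_voltage(3.0, 30.0, t)
    print(f"{t:>4} °C: {v:.2f} V under a 30 mA pulse, ok={survives_pulse(3.0, 30.0, t)}")
```

With these assumed figures the same 30 mA pulse that is harmless at 20 °C pulls the terminal voltage below the threshold at -20 °C, which is how a device can reset or lose packets only during cold periods.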

4. Firmware, peripherals, and hidden standby loads

Battery drain is not only about radio time. LEDs, level shifters, always-on sensors, poorly tuned DC-DC converters, and background debug functions can quietly destroy runtime. A standby current difference of 15 µA versus 80 µA looks small, but over 36 months it becomes material. In devices intended for long-life renewable-energy monitoring, microamp-level leakage deserves the same attention as milliamp-level transmit current.
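
The arithmetic behind the 15 µA versus 80 µA comparison is short enough to check directly. A minimal sketch, assuming a constant standby current and ignoring self-discharge:

```python
HOURS_PER_YEAR = 8_766  # 365.25 days x 24 h


def standby_charge_mah(standby_ua: float, months: float) -> float:
    """Charge drawn by a constant standby current over `months`, in mAh."""
    hours = months / 12.0 * HOURS_PER_YEAR
    return standby_ua / 1000.0 * hours


extra_mah = standby_charge_mah(80.0, 36) - standby_charge_mah(15.0, 36)
print(f"extra standby drain over 36 months: {extra_mah:.0f} mAh")
```

That is roughly 1.7 Ah lost to standby alone, several times the capacity of a CR2032 coin cell (typically around 220 to 240 mAh) and a large share of even an AA-size primary lithium cell.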

Practical warning signs during evaluation

- Battery life estimates are given without temperature range, transmission interval, or retry assumptions.

- Supplier documents mention “ultra-low power” but do not disclose sleep current, peak pulse current, or battery discharge curves.

- Protocol compatibility is claimed, yet there is no data on mesh density, join stability, or interference testing.

- Devices rely on coin cells even though payload bursts exceed what the cell can support without voltage sag.

These warning signs matter because in real projects the cost of replacing a battery-backed sensor can be far higher than the component itself. In remote solar assets or multi-building energy systems, service dispatch can exceed the battery cost by 10 times or more when labor, site access, and downtime are counted.
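
One way to quantify that point is to count in-field battery swaps over a planning horizon and price each swap as battery plus dispatch. The `swap_cost` function and the example figures (a $5 cell, a $60 site visit, a 120-month horizon) are hypothetical; substitute your own labor, access, and downtime costs.

```python
import math


def swap_cost(horizon_months: int, battery_life_months: float,
              battery_cost: float, dispatch_cost: float) -> float:
    """Cost of in-field battery replacements per device over a planning horizon."""
    # The factory-installed cell covers the first period; every later period needs a swap.
    swaps = max(0, math.ceil(horizon_months / battery_life_months) - 1)
    return swaps * (battery_cost + dispatch_cost)


for life in (24, 60):
    print(f"{life}-month battery: ${swap_cost(120, life, 5.0, 60.0):.0f} per device")
```

Over 120 months, a 24-month battery needs four swaps ($260 per device) versus one swap ($65) for a 60-month battery, and in both cases the dispatch line, not the cell, accounts for over 90% of each swap.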

How protocol choice and network behavior affect lithium battery performance

Protocol selection directly shapes battery consumption. In renewable-energy IoT, the wrong choice can lock operators into frequent replacements, unstable telemetry, or both. Matter, Zigbee, Thread, BLE, and other low-power standards each have valid use cases, but their battery outcomes depend on topology, device role, packet frequency, and network maintenance behavior.

For example, a sleepy end device in a well-designed Zigbee network may perform efficiently for years. But once mesh congestion rises, parent routing becomes unstable, or install density exceeds practical limits, retries increase. Similarly, Matter over Thread can simplify interoperability, yet if the border router design is weak or the node role is poorly assigned, the expected low-power advantage may erode.

Battery life also depends on how quickly a device completes a communication task. Lower latency is not only a user experience metric; it is a power metric. If an uplink, acknowledgment, and return-to-sleep cycle finishes in 500 ms instead of 2.5 seconds, radio-on time per cycle drops by 80%. Across 10,000 cycles per month, those savings become operationally significant.
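
The 500 ms versus 2.5 s comparison converts directly into radio-on hours and charge. A minimal sketch, assuming a hypothetical 10 mA average current while the device is awake:

```python
from typing import Tuple


def monthly_savings(cycles_per_month: int, slow_s: float, fast_s: float,
                    awake_ma: float = 10.0) -> Tuple[float, float]:
    """Return (radio-on hours saved, mAh saved) per month when each cycle gets faster.

    awake_ma is a hypothetical average current during the uplink/ACK/return-to-sleep cycle.
    """
    hours_saved = cycles_per_month * (slow_s - fast_s) / 3600.0
    return hours_saved, hours_saved * awake_ma


hours, mah = monthly_savings(10_000, 2.5, 0.5)
print(f"{hours:.1f} h of radio-on time and {mah:.0f} mAh saved per month")
```

At these assumed figures the faster cycle saves about 5.6 radio-on hours and roughly 56 mAh per month, or around 670 mAh per year, comparable to the capacity of about three CR2032-class coin cells.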

Protocol comparison for battery-sensitive renewable-energy IoT nodes

The table below summarizes practical battery considerations procurement teams should weigh when comparing common low-power protocols in energy and smart-building deployments.

The key conclusion is that protocol labels alone do not predict battery life. Buyers should ask for measured latency, retry rates, join stability, and current profiles under interference. In other words, “works with Matter” or “supports Zigbee mesh” is not enough without test conditions and power data tied to those claims.

What to request from suppliers

- A current-consumption profile showing sleep, wake, transmit, receive, and rejoin modes.

- Battery life assumptions tied to reporting interval, payload size, and temperature range.

- Mesh performance results at realistic node densities, such as 20, 50, or 100 nodes.

- Evidence of behavior under interference, packet loss, and border-router failover.
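
Once a supplier provides a per-mode current profile, a first-pass life estimate is a time-weighted sum. The sketch below assumes modes are mutually exclusive and that sleep fills all remaining time; the mode currents, daily durations, and the 225 mAh coin-cell capacity are hypothetical, and the estimate ignores self-discharge and temperature.

```python
from typing import Dict, Tuple

SECONDS_PER_DAY = 86_400
HOURS_PER_YEAR = 8_766

# Hypothetical supplier profile: mode -> (current in mA, seconds per day in that mode).
ACTIVE_MODES: Dict[str, Tuple[float, float]] = {
    "wake":     (3.0, 96.0),   # 96 reports/day x 1.0 s of MCU + sensor time
    "transmit": (20.0, 4.8),   # 96 x 50 ms
    "receive":  (8.0, 9.6),    # 96 x 100 ms ACK/poll windows
    "rejoin":   (12.0, 6.0),   # one 6 s network rejoin per day
}
SLEEP_MA = 0.003  # 3 µA sleep floor


def average_current_ma(active: Dict[str, Tuple[float, float]], sleep_ma: float) -> float:
    """Time-weighted average current over one day."""
    active_s = sum(seconds for _, seconds in active.values())
    if active_s > SECONDS_PER_DAY:
        raise ValueError("active modes exceed 24 hours")
    charge_mas = sum(ma * seconds for ma, seconds in active.values())
    charge_mas += sleep_ma * (SECONDS_PER_DAY - active_s)
    return charge_mas / SECONDS_PER_DAY


def life_years(capacity_mah: float, avg_ma: float) -> float:
    """Runtime in years for a given capacity and average current."""
    return capacity_mah / avg_ma / HOURS_PER_YEAR


avg_ma = average_current_ma(ACTIVE_MODES, SLEEP_MA)
print(f"average {avg_ma * 1000:.2f} µA -> {life_years(225.0, avg_ma):.1f} years on 225 mAh")
```

Writing the profile this way also makes the rejoin row visible: if interference forces ten rejoins a day instead of one, that single row grows tenfold, which is why rejoin behavior belongs in the supplier's data.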

For enterprise decision-makers, these data points are far more useful than broad “years of battery life” claims because they translate directly into field maintenance budgets and replacement planning.

Battery selection, design controls, and procurement criteria that reduce failure

Choosing the right lithium battery for IoT devices in renewable-energy systems is not just about capacity in mAh. The battery must match pulse current, temperature profile, expected service interval, enclosure design, and communication pattern. A larger battery can still fail early if peak current is unsupported or if the firmware forces too many wake cycles.

For procurement teams, the practical goal is to move from capacity-based buying to application-based qualification. That means evaluating at least 6 criteria: chemistry fit, pulse capability, self-discharge, shelf life, temperature tolerance, and field replaceability. It also means checking whether the battery data were measured on the actual device PCB rather than in isolation.

Recommended evaluation framework

The table below provides a practical screening framework for battery-backed renewable-energy IoT devices before volume purchasing.

This framework helps separate engineered products from marketing-led products. If a vendor cannot explain how its battery-backed node behaves during cold starts, packet retries, or low-voltage conditions, the risk shifts directly to the buyer.

Implementation actions that usually improve runtime

- Increase transmission interval for noncritical telemetry from 1 minute to 5 or 15 minutes where operationally acceptable.

- Use event-driven reporting instead of constant polling for slow-changing values such as room temperature or ambient humidity.

- Validate mesh planning before rollout so devices are not forced into high-retry communication paths.

- Specify enclosure ventilation or thermal shielding for rooftop and cabinet installations exposed to solar heating.

- Require firmware power audits before final purchase approval, especially for products expected to remain installed for more than 36 months.
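
Event-driven reporting for slow-changing values is often built from a deadband plus a heartbeat: send when the value moves by more than a threshold, or when a maximum silence interval has passed (similar in spirit to the reportable-change and maximum-interval settings in Zigbee attribute reporting). The `DeadbandReporter` class below is a hypothetical sketch of that policy, not a Zigbee, Thread, or Matter API; the 0.5 °C deadband and 15-minute heartbeat are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DeadbandReporter:
    """Report on significant change or on heartbeat timeout (hypothetical policy)."""
    deadband: float      # minimum change that triggers a report
    heartbeat_s: float   # maximum time allowed between reports
    last_value: Optional[float] = None
    last_report_t: Optional[float] = None

    def should_report(self, t: float, value: float) -> bool:
        due = (self.last_report_t is None                           # first sample
               or t - self.last_report_t >= self.heartbeat_s        # heartbeat expired
               or abs(value - self.last_value) >= self.deadband)    # meaningful change
        if due:
            self.last_value, self.last_report_t = value, t
        return due


# One hour of one-minute samples from a room drifting up by 0.01 °C per minute.
reporter = DeadbandReporter(deadband=0.5, heartbeat_s=900)
reports = sum(reporter.should_report(60 * k, 21.0 + 0.01 * k) for k in range(60))
print(f"{reports} reports instead of 60 polled transmissions")
```

In this example the drift never crosses the deadband, so only the first sample and the 15-minute heartbeats are sent. The heartbeat keeps the backend able to tell a quiet sensor from a dead one, while the deadband removes most routine transmissions.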

In many projects, these changes produce greater battery-life gains than switching to a nominally larger cell. Better design discipline usually beats bigger capacity when the problem is protocol inefficiency or hidden standby draw.

FAQ for operators, buyers, and decision-makers

How do I know whether a battery problem is caused by the cell or the network?

Start by comparing devices in similar thermal conditions but different network quality zones. If batteries drain faster in areas with weak signal, higher latency, or repeated rejoins, the problem is likely communication-related. Logging retry counts, wake duration, and voltage under transmit load over 2 to 4 weeks can usually reveal the root cause.
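
The zone comparison can be sketched as a group-by over field logs. The log records, the "strong" and "weak" zone labels, and the 1.5x decision ratio below are hypothetical; the point is the method: hold temperature roughly constant, then check whether drain tracks network quality.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple

# Hypothetical 4-week summaries for devices in the same thermal band:
# (device_id, network_zone, mean retries per report, loaded-voltage decline in mV/week)
LOGS: List[Tuple[str, str, float, float]] = [
    ("n01", "strong", 0.1, 2.0),
    ("n02", "strong", 0.2, 2.5),
    ("n03", "strong", 0.1, 1.8),
    ("n04", "weak", 1.8, 6.1),
    ("n05", "weak", 2.4, 7.3),
    ("n06", "weak", 2.0, 6.6),
]


def drain_by_zone(logs: List[Tuple[str, str, float, float]]) -> Dict[str, Tuple[float, float]]:
    """Map each zone to (mean retries per report, mean voltage decline in mV/week)."""
    rows_by_zone = defaultdict(list)
    for _, zone, retries, decline_mv in logs:
        rows_by_zone[zone].append((retries, decline_mv))
    return {zone: (mean(r for r, _ in rows), mean(d for _, d in rows))
            for zone, rows in rows_by_zone.items()}


def likely_network_cause(summary: Dict[str, Tuple[float, float]], ratio: float = 1.5) -> bool:
    """Flag a communication-related cause if the weak zone drains `ratio` times faster."""
    return summary["weak"][1] >= ratio * summary["strong"][1]


summary = drain_by_zone(LOGS)
print(summary, "network-related:", likely_network_cause(summary))
```

If the drain ratio stays near 1.0 across zones with similar temperatures, the cell, its storage history, or the firmware power states become the more likely suspects.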

Are rechargeable lithium batteries always better for renewable-energy IoT?

Not always. Rechargeable lithium options can work well when paired with small solar harvesters or regular maintenance cycles, but they add charging management complexity and can degrade differently under heat. For low-duty remote sensors with multi-year service goals, primary lithium cells may still be the better fit if pulse demand and temperature range are properly managed.

What service-life target is realistic?

A realistic target depends on reporting frequency, protocol, and environment. In many renewable-energy IoT applications, 24 to 60 months is more credible than broad 10-year claims unless the payload is tiny, the network is highly stable, and the site temperature remains moderate. Decision-makers should ask for scenario-based life estimates rather than one headline number.

Which procurement documents matter most?

Ask for discharge curves, whole-device current profiles, protocol test results under interference, and firmware power-state descriptions. For large deployments, request a pilot phase of 30 to 90 days before full rollout. That short validation window can prevent years of replacement cost.

Short lithium battery life in IoT devices is usually the visible symptom of deeper engineering choices: protocol inefficiency, unstable mesh behavior, harsh temperature exposure, and incomplete power optimization. In renewable-energy systems, these issues carry a direct cost in maintenance, uptime, and lifecycle value.

NexusHome Intelligence evaluates smart home and IoT hardware through measurable data, not broad claims. By connecting battery degradation with protocol latency, mesh capacity, standby power, and real-world component behavior, we help research teams, operators, procurement managers, and enterprise leaders make decisions with fewer blind spots.

If you are comparing battery-backed IoT products for smart buildings, energy monitoring, HVAC automation, or distributed renewable-energy assets, contact NHI to discuss benchmarking criteria, product validation priorities, or a more tailored selection framework for your deployment.

Protocol_Architect

Dr. Thorne is a leading architect in IoT mesh protocols with 15+ years at NexusHome Intelligence. His research specializes in high-availability systems and sub-GHz propagation modeling.
