What a Smart Home Think Tank Can Do Beyond Reports

Beyond publishing reports, a smart home think tank turns fragmented claims into actionable evidence. At NexusHome Intelligence (NHI), our smart home compliance laboratory delivers IoT hardware benchmarking, Matter protocol data, and IoT supply chain metrics that help buyers identify verified IoT manufacturers and trusted smart home factories. For engineers, operators, and decision-makers, this is where IoT engineering truth replaces marketing noise.

Why does a smart home think tank matter in renewable energy operations?

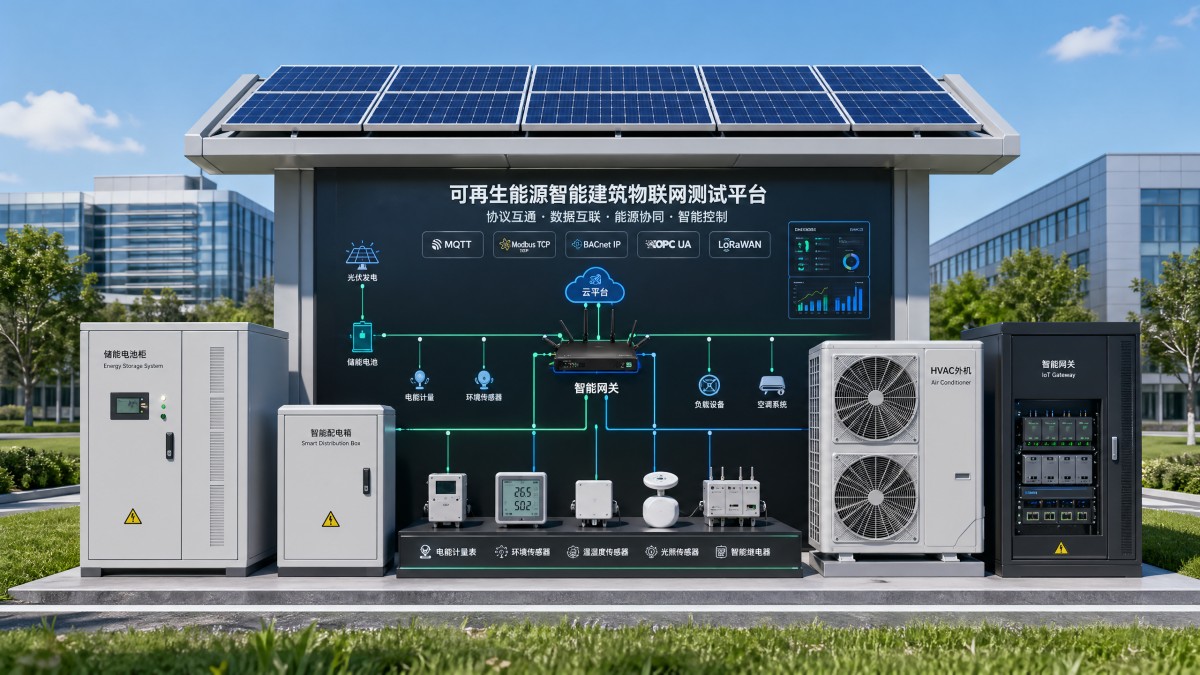

In renewable energy, connected devices do far more than automate homes. They influence energy visibility, HVAC coordination, battery storage behavior, demand response, access control, and equipment uptime across homes, campuses, mixed-use buildings, and distributed energy sites. When protocol claims are unclear, a small interoperability issue can ripple into delayed commissioning, unstable monitoring, and poor energy optimization over a 12–36 month operating cycle.

That is where a smart home think tank becomes operationally useful. Instead of repeating vendor language, it tests what happens when Matter, Thread, Zigbee, BLE, Wi-Fi, and gateway logic meet real electrical loads, dense wireless environments, and renewable energy control requirements. For buyers in solar-integrated buildings or electrified commercial properties, this evidence reduces the gap between a promising demo and a working deployment.

NexusHome Intelligence positions itself as an engineering filter between manufacturers and global buyers. In practical terms, this means benchmarking device behavior under interference, validating standby consumption, checking telemetry stability, and examining whether a product can support energy and climate-control logic without introducing hidden risk. In renewable energy applications, even a low-power relay or occupancy sensor can affect load shifting decisions, comfort targets, and reporting accuracy.

For information researchers, operators, procurement teams, and enterprise decision-makers, the value extends beyond reports. A capable think tank helps answer four immediate questions: which claims are testable, which devices scale beyond pilot volume, which factories are technically consistent, and which combinations support lower lifecycle risk in smart energy environments.

What problems does it solve for different stakeholders?

- Researchers gain a structured reference point for comparing protocols, power behavior, and manufacturer credibility without relying on scattered brochures.

- Operators gain clearer expectations for installation tolerance, network stability, firmware maintenance cycles, and data accuracy in daily use.

- Procurement teams gain measurable screening criteria across 3 core dimensions: protocol compliance, component consistency, and long-term energy performance.

- Decision-makers gain a basis for investment choices, especially when evaluating multi-site rollouts, OEM/ODM sourcing, or building-to-grid optimization projects.

Why renewable energy projects feel the pain more quickly

In a conventional consumer setup, a delayed response or unstable sensor may be annoying. In renewable energy environments, the same issue can distort occupancy-led HVAC schedules, battery charging priorities, or peak-load control windows measured in 15-minute intervals. This is why data-driven verification matters more in decarbonization projects than in ordinary gadget selection.
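To make the 15-minute window concrete, here is a minimal Python sketch of how telemetry could be grouped into 15-minute intervals and checked for gaps. The function name `interval_demand`, the `expected_per_interval` parameter, and the 0.8 coverage threshold are illustrative assumptions, not NHI tooling or a published method.

```python
from collections import defaultdict

INTERVAL_S = 15 * 60  # 15-minute control window, as mentioned in the text


def interval_demand(samples, expected_per_interval, min_coverage=0.8):
    """Average power per 15-minute interval, with a coverage flag.

    samples: iterable of (unix_seconds, watts) tuples.
    expected_per_interval: samples a healthy device should report per window.
    min_coverage: hypothetical threshold below which a window is suspect.
    """
    buckets = defaultdict(list)
    for ts, watts in samples:
        # Align each reading to the start of its 15-minute window.
        buckets[int(ts // INTERVAL_S) * INTERVAL_S].append(watts)

    report = {}
    for start in sorted(buckets):
        readings = buckets[start]
        coverage = len(readings) / expected_per_interval
        report[start] = {
            "avg_watts": sum(readings) / len(readings),
            "coverage": coverage,
            "reliable": coverage >= min_coverage,
        }
    return report


if __name__ == "__main__":
    # A sensor expected to report every 5 minutes drops two readings
    # in the second window.
    data = [(0, 100.0), (300, 200.0), (600, 300.0), (900, 400.0)]
    for start, row in interval_demand(data, expected_per_interval=3).items():
        print(start, row)
```

A window flagged as unreliable still has an average, but that average rests on too few readings to drive a peak-load decision with confidence, which is the distortion the paragraph above describes.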

The more a project depends on electrification, local generation, and digital controls, the more valuable independent testing becomes. A smart home think tank is not just a content publisher. It becomes a decision support layer for energy-aware buildings and connected infrastructure.

Beyond reports: what practical functions should a smart home think tank provide?

A useful think tank should convert technical complexity into procurement-ready evidence. Reports alone are passive. Buyers in renewable energy and smart building programs need active validation tools: benchmark frameworks, scenario-based comparisons, supplier filtering logic, and interpretation support for protocol and power data. When these functions are missing, teams often overbuy features while underchecking reliability.

NHI’s manifesto points to five verification pillars, and each has direct value in energy-connected environments. Connectivity and protocol testing affects gateway selection and commissioning speed. Security and access validation affects site resilience and local processing requirements. Energy and climate control testing affects carbon reduction outcomes. Hardware component analysis affects failure rates. Wearable and health tech testing matters for elderly care, workforce safety, and occupancy-linked automation.

The most effective think tanks also support pre-purchase judgment. That means identifying whether a device is suitable for small pilot batches, medium-volume rollout, or large multi-site procurement. Typical buyer checkpoints include 5 key items: protocol stack maturity, firmware update path, standby consumption range, environmental tolerance, and factory process consistency.

In renewable energy projects, this work shortens the path from technical evaluation to implementation planning. Instead of asking only “Does it work?”, teams can ask “Does it work at scale, under interference, with energy controls, and within a 2–4 week validation window?” That is a much stronger buying question.

Core functions that create business value

- Protocol benchmarking that measures real latency, mesh behavior, and congestion tolerance rather than relying on label-based compatibility claims.

- Energy performance analysis that checks standby power, switching efficiency, monitoring precision, and controller response in climate-control use cases.

- Supply chain verification that distinguishes technically capable OEM/ODM partners from factories that market aggressively but document poorly.

- Decision translation that turns raw test data into sourcing, deployment, and risk-prioritization guidance for commercial teams.
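As one illustration of the protocol benchmarking function listed above, the sketch below summarizes command round-trip latencies into percentiles and a loss rate. It assumes latency samples in milliseconds have already been collected by some test harness; the function names and the nearest-rank percentile method are choices made for this example, not a description of NHI's procedure.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list, for pct in (0, 100]."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize_latency(latencies_ms, timeouts=0):
    """Summarize one test run.

    latencies_ms: round-trip times of commands that completed.
    timeouts: number of commands that never completed (hypothetical input).
    """
    total = len(latencies_ms) + timeouts
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
        "max_ms": max(latencies_ms),
        "loss_rate": timeouts / total,
    }


if __name__ == "__main__":
    quiet = [40, 42, 45, 47, 50, 52, 55, 58, 60, 65]
    congested = [40, 45, 60, 90, 120, 180, 250, 400, 800, 1500]
    print("quiet    ", summarize_latency(quiet))
    print("congested", summarize_latency(congested, timeouts=2))
```

Reporting the tail (p95, maximum, loss rate) alongside the median is the point of the exercise: two devices with the same median can behave very differently under congestion.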

How this differs from a generic B2B directory

A directory tells you who exists. A benchmark-driven think tank tells you which supplier claims survive technical scrutiny. For renewable energy buyers, that difference affects replacement rates, troubleshooting hours, and long-term operating costs. It also helps bridge the communication gap between engineering teams and procurement departments, which often evaluate value on different timelines.

This matters especially when sourcing from multiple regions. If one supplier promotes “low power” and another promotes “Matter ready,” the smart question is not which slogan sounds stronger. The smart question is which product produces stable field behavior in the actual control stack you plan to deploy.

Which benchmarks matter most for smart energy, HVAC, and distributed building control?

Not every benchmark has equal value in renewable energy. Teams usually need a short list of indicators that connect device behavior to energy outcomes. In mixed-use buildings, solar-enabled residences, or distributed asset environments, the most important metrics often sit at the intersection of connectivity, control responsiveness, and power efficiency.

Before comparing suppliers, it helps to separate “feature presence” from “system usefulness.” A relay with smart scheduling is not automatically suitable for peak-load management. A sensor with wireless support is not automatically fit for high-interference electrical rooms. A camera with AI processing is not automatically acceptable if local processing speed or privacy requirements are unclear.

The table below summarizes benchmark areas that procurement and engineering teams commonly review when evaluating devices for energy-aware buildings and renewable energy applications. These are not universal pass/fail thresholds; they are practical screening dimensions used to reduce technical ambiguity early in the sourcing cycle.

These metrics help buyers focus on operational relevance. For example, a device used in a building energy management layer should be reviewed over continuous operation windows such as 24–72 hours, not just brief showroom demonstrations. In many energy projects, real value appears only when testing covers congestion, temperature variation, and intermittent network stress.

Three benchmark groups NHI can turn into decisions

First, connectivity benchmarks clarify whether protocol claims survive real deployment. Second, energy and climate-control benchmarks reveal whether efficiency claims align with decarbonization goals. Third, component-level analysis shows whether a supplier can maintain consistency as orders move from sampling to scaled production. Together, these 3 groups provide a stronger sourcing basis than marketing collateral alone.

For renewable energy operators, this translation matters because device performance is cumulative. A slightly inefficient relay, a drifting sensor, and an unstable gateway can combine into meaningful energy loss and labor cost over a year of operation.
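The cumulative effect can be estimated with simple arithmetic. The sketch below covers only the standby-power part of that effect: it multiplies fleet-wide standby draw by the hours in a year. The device counts and wattages are made-up illustration values, not measurements.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def annual_standby_kwh(fleet):
    """Annual standby energy in kWh.

    fleet: list of (device_count, standby_watts) pairs.
    """
    total_watts = sum(count * watts for count, watts in fleet)
    return total_watts * HOURS_PER_YEAR / 1000


if __name__ == "__main__":
    # Hypothetical site: 1,000 relays and 200 gateways.
    baseline = [(1000, 0.5), (200, 1.0)]
    # Same site with relays that idle at half the power.
    improved = [(1000, 0.25), (200, 1.0)]
    print("baseline kWh/year:", annual_standby_kwh(baseline))
    print("improved kWh/year:", annual_standby_kwh(improved))
```

Sensor drift and gateway instability add labor and control-quality costs on top of this figure, and those are harder to reduce to a single formula.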

How should procurement teams compare suppliers and verified IoT manufacturers?

Procurement in smart energy systems often fails when teams compare brochures instead of evidence layers. The better approach is to compare suppliers across technical, operational, and supply chain dimensions. In many cases, a manufacturer with quieter branding but stronger test consistency is the lower-risk choice for a 6–18 month rollout.

A smart home think tank can make that comparison much more disciplined. Rather than asking suppliers to self-define quality, buyers can score them against shared criteria: protocol behavior, power performance, firmware governance, documentation depth, sampling responsiveness, and production repeatability. This is especially important for renewable energy projects where commissioning schedules and integration dependencies are tight.
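A shared scoring scheme of this kind could be sketched as a weighted average over the six criteria named above. The weights, the 0 to 5 scale, and the function names are assumptions for illustration; a real program would set them with engineering and procurement together.

```python
# Hypothetical weights for the six criteria named in the text.
CRITERIA_WEIGHTS = {
    "protocol_behavior": 0.25,
    "power_performance": 0.20,
    "firmware_governance": 0.15,
    "documentation_depth": 0.10,
    "sampling_responsiveness": 0.10,
    "production_repeatability": 0.20,
}


def score_supplier(scores, weights=CRITERIA_WEIGHTS):
    """Weighted average of per-criterion scores (assumed 0-5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        # Refuse to score a supplier with unevidenced criteria.
        raise ValueError(f"missing criteria: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


def rank_suppliers(candidates):
    """Return supplier names ordered from highest to lowest score."""
    return sorted(
        candidates,
        key=lambda name: score_supplier(candidates[name]),
        reverse=True,
    )


if __name__ == "__main__":
    candidates = {
        "loud_brand": dict(protocol_behavior=3, power_performance=3,
                           firmware_governance=2, documentation_depth=2,
                           sampling_responsiveness=5, production_repeatability=3),
        "quiet_factory": dict(protocol_behavior=4, power_performance=4,
                              firmware_governance=4, documentation_depth=4,
                              sampling_responsiveness=3, production_repeatability=5),
    }
    for name in rank_suppliers(candidates):
        print(name, round(score_supplier(candidates[name]), 2))
```

Raising an error on a missing criterion is deliberate: a supplier that has not supplied evidence for a dimension should not be scored as if it had.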

The table below shows a practical comparison framework that procurement teams can use when shortlisting trusted smart home factories or verified IoT manufacturers for energy-linked applications. It is designed for pre-RFQ discussions, sample review, and cross-functional approval workflows.

This framework is useful because it separates product attractiveness from sourcing confidence. A supplier may offer a strong price or an impressive feature list, yet still create hidden cost through weak process control or limited validation. In renewable energy, those hidden costs often surface as site revisits, firmware workarounds, or delayed load optimization.

A 4-step procurement filter for B2B buyers

- Step 1: Define the operating scenario, such as apartment energy control, commercial HVAC coordination, or distributed access and occupancy logic.

- Step 2: Request benchmark-backed evidence for 3–5 priority indicators instead of broad presentations.

- Step 3: Validate sample behavior during a 7–15 day review window with engineering and operations involved together.

- Step 4: Approve supplier progression only after documentation, firmware path, and production consistency questions are answered.
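The four steps above can be read as ordered gates, where a supplier does not progress until the earliest unmet step is resolved. The following sketch encodes that reading; the `Evaluation` fields and the `next_blocking_step` helper are hypothetical names, and the thresholds simply restate the numbers in the list.

```python
from dataclasses import dataclass, field

# Topics Step 4 requires to be answered before a supplier progresses.
REQUIRED_ANSWERS = ("documentation", "firmware_path", "production_consistency")


@dataclass
class Evaluation:
    scenario: str = ""             # Step 1: e.g. "commercial HVAC coordination"
    evidence_indicators: int = 0   # Step 2: indicators with benchmark-backed evidence
    review_days: int = 0           # Step 3: length of the sample review window
    joint_review: bool = False     # Step 3: engineering and operations both involved
    answered: set = field(default_factory=set)  # Step 4: topics already answered


def next_blocking_step(ev):
    """Return the first unmet step as a string, or None if all four pass."""
    if not ev.scenario.strip():
        return "Step 1: define the operating scenario"
    if ev.evidence_indicators < 3:
        return "Step 2: request evidence for at least 3 priority indicators"
    if ev.review_days < 7 or not ev.joint_review:
        return "Step 3: run a joint 7-15 day sample review"
    missing = [q for q in REQUIRED_ANSWERS if q not in ev.answered]
    if missing:
        return "Step 4: unanswered questions: " + ", ".join(missing)
    return None


if __name__ == "__main__":
    ev = Evaluation(scenario="apartment energy control",
                    evidence_indicators=4, review_days=10, joint_review=True,
                    answered={"documentation"})
    print(next_blocking_step(ev))
```

Returning only the first blocking step keeps the conversation with the supplier focused on one gap at a time.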

Common comparison mistake

Many teams compare unit price before comparing operational fit. That is risky in renewable energy systems, where the true cost includes downtime, troubleshooting labor, calibration effort, and the performance impact of unreliable data. A think tank adds value by making those less visible costs easier to discuss early.

What standards, compliance, and implementation questions should buyers ask?

Compliance is rarely the headline topic in early sourcing calls, but it becomes critical once projects move toward deployment. For smart home and IoT hardware used in renewable energy contexts, buyers should ask not only whether a device references a standard, but how that standard affects implementation, data handling, maintenance, and cross-system compatibility.

Common areas of concern include protocol conformity, electrical safety expectations, local processing capability, privacy handling, and interoperability with building or energy management layers. In Europe and other regulated markets, data flow and local edge behavior may matter as much as sensor accuracy. In commercial buildings, integration readiness can influence handover timelines by weeks rather than days.

A benchmarking laboratory does not replace formal certification bodies, but it helps procurement teams interpret what documentation means in practice. For example, when a supplier claims support for Matter, Zigbee 3.0, or local edge processing, buyers still need implementation detail: under what topology, with what firmware assumptions, and with what practical limits?

This is particularly relevant in renewable energy because system value often depends on reliable coordination among meters, relays, gateways, climate controls, and occupancy or access devices. If any one layer underperforms, the energy strategy may look strong on paper and weak in operation.

Questions that should be asked before approval

- What protocol behavior has been tested in real multi-node or interference-heavy conditions, and what limits were observed?

- What is the expected firmware update process, including rollback support and maintenance responsibility over the first 12 months?

- For devices collecting usage or occupancy data, what local processing and privacy controls are available?

- What documentation package can be shared during evaluation, including datasheets, protocol notes, and integration guidance?

Implementation timeline expectations

For many B2B projects, a realistic path includes 3 stages: sample validation, integration review, and controlled rollout. Depending on device type and system complexity, the first two stages often take 2–6 weeks combined. A think tank helps reduce delay by clarifying issues before installation teams are mobilized.

That timing advantage matters in energy retrofit projects, seasonal HVAC planning, and pilot-to-scale programs where every week of uncertainty can delay savings capture or occupancy readiness.

FAQ: what do buyers and operators most often want to know?

The questions below reflect common search intent from researchers, operators, buyers, and executives evaluating smart home benchmarking for renewable energy and smart building use cases. They also show why a smart home think tank has value beyond whitepapers.

How do I know whether an IoT device is suitable for renewable energy projects?

Start with operational fit, not branding. Review whether the device supports stable communication, measurable standby performance, and useful integration behavior under realistic load and network conditions. For many projects, 3 checks are essential: protocol stability, power behavior, and documentation quality. If any of these are unclear, the device may still be usable, but project risk increases.

What should procurement teams prioritize during supplier selection?

Prioritize evidence that affects total project outcome: test-backed interoperability, repeatable hardware quality, and a workable support path. Price matters, but it should be reviewed alongside installation complexity, expected maintenance frequency, and field update readiness. In a scaled rollout, these factors often outweigh small unit-price differences.

Can a smart home think tank help with factory selection?

Yes, especially when it evaluates not only the product but also the technical discipline behind it. Supply chain metrics, component consistency analysis, and benchmark standardization can help identify trusted smart home factories and verified IoT manufacturers that are stronger in engineering than in marketing. That is valuable when sourcing from broad OEM/ODM networks.

How long should a practical evaluation take before purchase?

A focused initial review often fits within 7–15 days for a single product line if scope is controlled. Broader integration validation can extend to 2–4 weeks, particularly when gateways, energy monitoring, and HVAC logic all need to be checked together. Compressing the review below this range may save time upfront but tends to increase downstream troubleshooting.

Why choose NHI when you need more than reports?

NexusHome Intelligence is built for buyers who need engineering truth rather than promotional language. Its value lies in independent benchmarking, protocol-level scrutiny, supply chain transparency, and the ability to translate technical findings into sourcing decisions for renewable energy and connected building programs. This is especially useful when your team must align engineering, procurement, and executive priorities within one buying process.

If you are comparing smart relays, sensors, gateways, locks, climate-control devices, or other IoT hardware for energy-aware buildings, NHI can help frame the right questions before you commit budget. That includes parameter confirmation, product selection logic, sample review priorities, expected delivery cycle discussion, documentation checkpoints, and suitability for pilot, medium-batch, or scaled deployment.

For procurement teams, the conversation can focus on verified IoT manufacturers, trusted smart home factories, and benchmark-backed shortlist creation. For operators, it can focus on commissioning risk, maintenance expectations, and data stability. For decision-makers, it can focus on lifecycle risk, rollout readiness, and whether a given device stack supports energy efficiency goals over the long term.

If you need support with protocol evaluation, supplier comparison, renewable energy use-case matching, certification-related questions, sample planning, or quote-stage technical clarification, contact NHI with your target scenario and device category. A sharper evaluation at the start usually saves far more time and cost than a correction after deployment.

Dr. Thorne is a leading architect in IoT mesh protocols with 15+ years at NexusHome Intelligence. His research specializes in high-availability systems and sub-GHz propagation modeling.
